Combinatorics for Cats

Probability for Pussies

Functions for Felix

Featuring assorted other fluffy creatures.

![[Teacher Cat]

This site will work just fine without images.

But you'll be missing the Cat-Toons.

And the maths may be a bit harder to read.](01_TutorCat.png)

Combinatorics for Cats

Probability for Pussies

Functions for Felix

Featuring assorted other fluffy creatures.

![[Teacher Cat]

This site will work just fine without images.

But you'll be missing the Cat-Toons.

And the maths may be a bit harder to read.](01_TutorCat.png)

(c) Vitenka 2006

Let's start right at the beginning.

In the beginning

![[circle]](11_Circle.png)

The earth was without form.

And void.

Then someone drew a cat.

![[AngelCat -- Get on with it!]](12_AngeliCat.png)

You can tell it's a cat, because the author says that it is.

That's an assertion. You have to take them on trust, until you've seen them do enough useful things that you're convinced.

![[DevilCat -- That's Bad]](13_DevilCat.png)

![[FatCat -- What is probability for?]](21_FatCat.png)

Curiously, my curious cat asks an important question.

![[AnvilSquishedCat. (narrator) -- Curiosity killed the... Well, actually the anvil did this time.]](22_SquishedCat.png)

It does lots of stuff.

Mainly it lets you answer the question "What're the chances?"

Which is important for lots of stuff.

![[GeneriCat -- Such as?]](23_GeriCat.png)

Such as making new silicon chips, or planning the best place for a housing estate.

![[Dog -- Or winning at cards]](23_CardDog.png)

Or gambling. Probability was invented for gambling.

It's time to play the tupenny stalls at the church fete. Pretend that you're enjoying yourself.

![[CatMitBalon -- Whee.]](31_CatWithBalloon.png)

One stall is offering to pay out to anyone who can roll a double six.

![[Two dice, sixes up]](32_TwoDice.png)

Another pays the same amount to whoever can pull an ace out of a pack of cards.

![[An Ace]](34_Ace.png)

Which game would it be better for you to play?

Why?

![[GamblingCat -- Come on... Baby needs a new flea collar...]](33_GamblingCat.png)

Well, this one is easy. Grab some dice. Grab a pack of cards.

Which one pays out more often?

... why are we waiting? Why-eye are we waiting...

... come on. Roll them dice. Draw a card then shuffle and do it again. Come on! ...

Ok. So it looks like the cards pay out about three times as often as the dice do.

![[Dogs playing poker]](41_PokerDogs.png)

But sometimes the cards don't pay out for a while. And sometimes the dice pay out two or even three times in a row.

![[Fox -- That's called luck.]](42_Fox.png)

Probability can't tell you what will happen.

![[SpyCat -- Only cheating can do that]](43_SpyCat.png)

It just says how likely it is to happen.

And it mainly does this by saying what happens in the long run.

![[ScoutCat -- *gasp* / *wheeze* / *etc.*]](44_ScoutCat.png)

Right. Time for one of those assertions.

The chance of 'n' equally likely outcomes is:

![]() - Pronounced 'one over enn'

- Pronounced 'one over enn'

Oh! Sorry. We need a scale.

![[Fish -- Eep!]](52_Fish.png)

Some people like percentages.

![[MagiCat(percentages) -- 10% yes, yess / 50% mmmmm / 78.2% Ohhh yes!]](53_MagiCat.png)

Those people are weird.

Some people like ratios.

![[Pony -- Two to one, four to three against]](54_Pony.png)

Those people lose all their money down the track.

We're mathematicians.

![[SoldierCat -- I'm a cat! / Sort of.]](61_SoldierCat.png)

Well, we're pretending to be.

We're going to have a scale of one down to zero.

![[Fish. Fishbones on a plate. FatCat -- *burp*]](62_Fish.png)

One is the top. Something with a probability of one is certain to happen. (Same as 100%. And there's no way to write that in betting language.)

Something with a probability of zero can never happen. (0%) And again...

![[Pony -- Sorry. / Horses are dumb.]](63_Pony.png)

A probability of ½ is 50%, or 1:1. It happens half the time.

Right. Lets convince ourselves that our one-over-n rule is sensible.

![[Box1 -- ? Box2 -- ? Box3 -- ? (*)]](71_Boxes.png)

I hid a cat in one of these boxes.

There's no way to tell which one I hid the cat in, each is equally likely.

If you open one (without picking them up, or shaking them, or listening for mewing or anything) - what are the chances that you will get your face clawed off by an angry escaping cat?

Well - it's one in three. 3:1 or 33.33333...% - or 1/3.

Did you pick right?

Pick up your face and we'll move on.

![[SadCat -- *Psst! / I'm in box 2.]](73_SadCat.png)

Let's convince ourselves.

![[LogiCat -- With the power of logic!]](81_LogiCat.png)

First of all, all the probabilities must add up to one.

If we open all of the boxes, we certainly opened the one with the cat in.

![[Box1 -- ? Box2 --?+SadCat -- See? Box3 -- ?]](82_Boxes.png)

(That's a rule - but it's also kind of obvious. We have to open one of the boxes, and a certainty has a probability of 1. Also, think what happens if you only have one box...)

But we also said that each box was equally likely to be chosen.

So we've got two things known.

Oh, and one thing more.

![[LogiCat -- Hold on to your maths, because here we go!]](83_LogiCat.png)

Let's write this down. We like symbols in maths - so.

We'll write the probability that the cat is to be found in box one as:

![]()

And so on.

![]()

Then we can write:

![[Eqn. p1 + p2 + p3 + ... + pn = Sigma(sup)n(sub i=1) pi = 1]](93_Enq.png)

And ![]()

![[LogiCat(forall variant) -- This bit's my fault.]](95_LogiCat.png)

![]()

A little bit of maths sorts this out for us.

![[TinyLogiCat -- A very little bit....]](97_LogiCat.png)

![[Eqn. p2 = p1, p3 = p1. etc.

Implies p1 + p2 + p3 + ... + pn = p1 + p1 + p1 + ... + p1

Implies n.p1 = 1

Implies p1 = 1/n

p2 = p1. p3 = p1. etc.

Implies pi = 1/n

Implies p2 = 1/n, p2 = 1/n, etc.]](98_Eqn.png)

![[TinyMagiCat -- Ta da!]](99_MagiCat.png)

So. There are 52 cards in a pack.

![[JokerCats(2) -- Not counting us]](101_JokerCat.png)

![[FatCat -- Until you lose some]](102_FatCat.png)

So the chance of choosing any particular one of them

![[QueenOfHeartsCat -- Me!]](103_QueenCat.png)

must be 1/52.

There are four aces. So the chances of drawing one should be:

![]()

Roughly one time in thirteen you will draw an ace.

Ok, I'm going to abandon percentages and horsey rules entirely from now on.

![[MagicCat + Pony -- Awww...]](111_MagiCat.png)

Percentages. They range from 100% meaning completely true, to 0% completely false, nice and linear (50% is half true)

That's easy then. Multiply the 0-1 number by 100, and it's a percentage.

Pack your bags, percentage lovers - you're outta here.

![[MagicCat -- Sorcerous Felix Cattus exeunt stagius sinister. Magically, even.]](112_MagiCat.png)

Right. Ratios are a bit more complicated, and because I hate them I'm not even going to justify myself.

Expressed as a probability, a:b (against) = b / (a+b)

( 3:1 on is the same as 1:3 against = 3/4 )

(e.g. 2:1 = 1/3, 5:4 = 4/9 )

![[Pony -- Lost my shirt

GeneriCat -- off to the glue factory.

Pony -- That's Bad. ]](113_Pony.png)

Note that while ratios are often pronounced as 'to' (e.g. 1:2 is 'one to two') - probabilities are often pronounced 'in'. (e.g. 2/13 is 'two in thirteen') This is because you can just multiply up. If you do something with a probability of success of 2/13 thirteen times, you'll expect to see it happen two of those times. So 'two successes in thirteen tries' - or just 'a two in thirteen chance'.

Notice my cheating, a couple of pages back? I used a rule without introducing it.

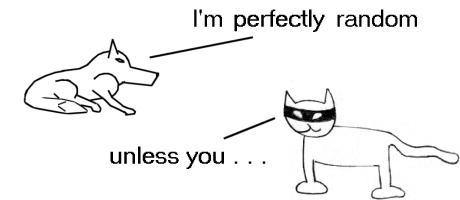

![[RandomCat -- Hi! I'm a cat!]](121_RandomCat.png)

Maths tends to be like that - to get anything done you have to mix a whole bunch of stuff together. Still, it's best to at least try and keep your feet on the ground.

![[Birdie -- wimp]](122_Birdie.png)

So, the unstated rule was that you can add a bunch of probabilities together.

And you can - sometimes. It isn't always safe. For example; flipping a coin. By our rules, the chances of a head are 1/2. But if we flip the coin twice - the chance isn't 1. It's possible to flip two tails in a row - so we're surely not certain to get a head.

It is safe when:

I'm not gonna prove that. You'll have to take it on trust. Fiddle about with some coins and stuff to convince yourself.

![[Birdie -- wimp]](122_Birdie.png)

Ok, fine. Let's do that rule.

![[Cat surrounded with bird feathers]](131_Birdie.png)

The full rule is:

![[Eqn. A = { a, b, ... }

P(A=a u A=b) = P(A=a) + P(A=b) <=> P(A=a n A=b) = 0]](132_Eqn.png)

First part - it has to be the same test. The coins example is a proof that the opposite isn't true - but it doesn't prove that it IS true for a 'same test'.

For that we need another example.

![[Box]](133_Box.png)

One cat. One box. The possible outcomes are 'the cat is in the box' (probability 1) and 'there are flying monkeys on the moons of jupiter' (probability zero) and a bunch of other impossible probabilities.

We already said that the total probability has to be one. So there's an example - it is at least sometimes true.

Now for the second part.

![[Two overlapping cats]](134_Cats.png)

If the two events 'a' and 'b' overlap, then it's not true any more. For example - let's have 'a' be "I rolled 4 or less" and 'b' be "I rolled 3 or more".

Now, if I roll a 3 or a 4, both a and b are true. So if we add them up then we have counted three and four twice. Which would add up to more than one. Since the probability can never be more than one, we know that's wrong.

On the other hand, we can add up EVERY possible 'a' and 'b' that way - and if ANY of them came to more than one, we'd know we counted something more than once.

![[FatCat -- That's Bad]](135_FatCat.png)

So the only way it can be safe to add them together is if they don't overlap.

. . .

Ok, that's a hand waving 'trust me' not a real explanation. It's all you're getting.

![[GeneriCat -- That's Bad]](136_GeneriCat.png)

So now we know what the chances are of drawing an Ace. What about those dice? TODO: With / without replacement. Do this BEFORE we do combination / permutation. To recap - we wanted to know what the chances are of getting a 12 on two dice. And by playing around we know it was about a third that of drawing an ace - which we now know is exactly 1/13. [CrapsCat -- About a 1/40? Come on baby...] We know what the chances are of rolling a six on one die - 1/6. So how do we get from that to rolling a 12 on two dice? Well, there's only one way to get a 12 - roll a six on one die, and a six on the other die. Now, unless we're cheating and aiming the second die at the first one - the number that comes up on the first die can't influence the number that comes up on the second die. We call these two die-roll events 'independent'. This is lucky. [LuckyCat -- Hi!] Because it lets us do this: Roll the first die. Now, unless we get a 6, then whatever comes up on the second die we can't win - so no point even looking. But that 1/6 of the time that it does come up a 6, we look at the second die. And 1/6 of the time THAT is a 6 as well, and we win. [GhandiCat -- First they ignore you, then they laugh at you, then they fight you, then you roll a double six and then....] So lets say we have, oh, I dunno, 72 throws. *On average* 1/6 of them have a 6 on the first die (72/6 = 12 throws). And then 1/6 of THOSE actually win. (12/6=2 - 2 in 72 are winning throws.) [LuckyCat -- I made that happen!] But we want the number out of 1, not out of 72. The same logic applies though - divide by six twice. [Eqn: P(A=6) * P(B=6) = 1/6 * 1/6 = 1/36 ] 15. Independent results. That is, in fact, a rule. The chances of some things happening are the chances of each individual thing happening, all multiplied together. But it only works if all of the events are completely separate - what we like to call 'independent'. I can show you why that's true with a little counterexample. [ SeeingEyeCat -- The disproof of your opposite is your friend, or something.] Let's say that we roll a die - and then draw that number of cards. What are the chances of getting an Ace? Well, the die roll is 1/6 - and then the probability of the second one is, uh... well I guess it depends on what I rolled on the die. It's going to be more if I rolled a six, and 1/13 if I only rolled a one. How can you even multiply those together? TODO: Have a discussion of this later, and show how you can still find the expected result by taking the average at each stage. Or, let's have a beauty competition. The 'most beautiful ear' competition. [Line up of cats and dogs, 4 cats, 2 dogs.] Ok, the judges are lazy. They pick an animal completely at random, then on that animal randomly pick an ear. What are the chances that we picked a cat and picked a floppy ear? The rule above would say '4 cats and out of 6 choices, so 4/5 (=2/3) - then there are 12 ears and 4 of them are floppy, so 4/12 (=1/3) of those. 2/3*1/3 = 2/9 - so two times in nine' But just look at them. Not a floppy eared cat in sight! How could our beauty judges pick the floppy eared cat as the best some of the time when there isn't one?! So obviously the rule is wrong. Or at least, it's wrong if the events depend upon one another. [Dead dog on wheels. Ed. -- In December 2005, Japanese dog show judges awarded the prize for most obedient dog to one which was dead and stuffed. The owner refused to return the prize money, stating that nowhere in the rules did it specify that the pet had to be alive. "Play dead, Fido. Stay. Goood dog." (URL) 16. 'given that' - Conditional probability. Right, so we need something a bit better. [4 cats, 2 dogs line-up repeated] What are the chances of picking a cat? Four in six (4/6 = 2/3) - or two thirds. [EM]Given that[/EM] we have chosen a cat, what are the chances of picking one with a floppy ear? [4 cats, one hiding its head under its paws -- Meanies!] None. Nothing. Nada. Not a sausage. [Cat eating a sausage -- Mmmf. Wha?] OK, that's sensible. We pretending-to-be-mathematicians people like to write that as: [MagiCat] [Eqn. P(B|A) = The probability of B happening, given that A happens] 17. (From pride - P(A|B) = P(A) when independent ) 18. (From pride - P(AnB) given A and B NOT independent) 19. (Why P(A|B)=p does not imply P(B|A)=p ) [HungryCat -- Give me curry, or I *will* eat your face ] [Badger -- Maybe I'll eat your face just for fun] Right. We have two separate events here - feeding the cat, and having my face eaten. But they're not independent. Let's say I'm lazy, have a 4/5 chance of having my face eaten. (Lazy *and* cruel, or at least unusual.) And, by bitter experience (badgers are much more careful in their face eating than cats), I know that the chance of forgetting to feed the cat is 1/4. Consequently, I wear paper bags over my head a lot. TODO: This wordy bit is crap. [Badger -- Dat's right, and I aint saying, neither.] Let's call 'F' the event of having my face eaten, and 'C' the event of feeding curry to my cat. (!C is, then, the one to watch out for.) 'b' stands for 'badger' - the chance that the badger eats my face. We don't know what it is, but we know it's there. P(F|!C) = 1. [HungryCat -- I just said that! Now feed me!] But what is P(!C|F)? We can draw out the whole table: Result Eaten Face Face OK Total Curry? Yes b 1-b 1 No 1 0 1 Total 1+b 1-b 2 Looking at the table, then: P(F) = ( 1+b ) / 2 We know that P(F and !C) = P(F|!C) * P(!C) = 1 * 1/4 = 1/4. But we also know that P(F and !C) = P(!C|F) * P(F) So 1/4 = P(!C|F) * (1+b)/2 P(!C|F) = (1/4) / ( (1+b)/2 = 2 / 4*(1+b) = 1/2*(1+b) So, with my face eaten - the chances that the cat did it are nothing like the chances of the cat eating my face, given I forgot to feed it. So. Bear that in mind. They might look similar, but P(A|B) is nothing like P(B|A). Unless the events are independent. (And that's another check for independence - though not a certain one. They can just happen to be the same) TODO: Example of that [HungryCat -- Banzai!!!!!] 20. ( P(AnB) = P(BnA) -- just checking! ) TODO: {Include that if they are independent, it doesn't matter which order you look at them in - but if they are dependant it might} TODO: {include dice example - P(A=6) * P(B=6|A=6) + P(B=6) * P(A=6|B=6) / nr of orders of counting (=2) so = the number we first thought of. } 21. So: P(AnB) = P(A).P(B) <=> A and B are 'independent events' An alternate formulation of independence - we can use it as a test. TODO: Add cartoons 30-??. Combinations / Permutations and probability. {Example to use - guessing a simple substitution cipher - they can't possibly be any harder than this. Quick mention of why they are, in fact, easier to break.} 26P26 possible combinations. "Are you psychic?" - Hide three kittens in exploding boxes. Give you a minute or two to rescue kittens - x boxes, y kittens, you can open z boxes. What are the chances that you rescue all the kittens? xCz possible different sets of boxes you could choose. The kittens could be arranged inside YOUR boxes zCy ways. But the kittens are arranged inside the boxes in xCy ways. Note the trick - it doesn't matter WHAT boxes you choose - you might as well always choose the first boxes you come to. You're just as likely to be right. You'll also look really confident and they'll be greatly impressed. [Exploding kitten -- Unless you guess wrong!] This works with lottery numbers, too. Assuming that the machines are fair, any set of numbers you pick are just as likely to win. But if you pick numbers that NO ONE ELSE DID, then you win more than if you pick numbers lots of people pick. (Because you'll have to share the prize.) So you need to pick numbers that SEEM unlikely. 33,34,35,36,37,38,39 - for example. It hasn't happened, but it's just as likely as, for example, 7,11,15,21,45,47 which did. But I bet less people picked it. In addition, since a lot of people pick 'lucky' numbers, and numbers based on their birth-date, you should probably avoid the numbers 1-33 (months of the year, days in the month etc.) You can BUY books for real cash money which try to sell you that advice. Ah heck, lotteries are a good way to explain probability. And ambling is where we started, anyway. ??. Recalculating a probability when there's one less option. Let's have five foxes. Each fox has four socks (and a mask and a brush, but never mind those) Take away one fox - and redistribute the socks evenly. [Five legged fox -- Don't try this at home, kids.] Five socks each. That's pretty simple. If things were evenly distributed before, they stay evenly distributed when you take away one of the options. But let's try a slightly more complicated example. We've got a mouse factory and some hungry cats. One has a huge funnel and catches many mice. The other three have smaller funnels, (each the same size) and catch fewer mice. [Cat funnel, mouse factory picture] 120 mice have fallen down so far. One cat has caught 60 mice, the other three 20 each. So the probability of a mouse falling down being eaten by them is: P(mouse gets eaten by fat cat) = 60/120 = 1/2 P(mouse gets eaten by smaller cat) = 20/120 = 1/6 What happens if the cat on the end (the smallest cat) gets full and redirects his funnel into the top? (It's silly to let mice go to waste, after all) Imagine we're throwing 120 more mice in. (Ignore the fact that even a lion would explode after eating this many mice.) 20 of those mice will go to the smallest cats funnel and loop around. We'll forget about them and concentrate on the 100 that go to the other cats. Well - those will go (on average) the same way the first set of 120 went. 60 to the first cat, 20 to each of the other 2. Then we can throw the 20 that looped around in, and they'll split up the same way. P(mouse gets eaten by fat cat) = 60/100 = 3/5 P(mouse gets eaten by smaller cat) = 20/100 = 1/5 So the 20 slip down - 20 * 3/5 = 12 to the fat cat, and 4 to each of the smaller cats. Now - let's do this with probability. Instead of 120 - we've got '1'. Instead of 120-20, we've got '1-1/6 = 5/6' 60/120 became 60/100. So to resize the probabilities after the smallest cat leaves, multiply by the old total (which is always 1, so do nothing) and then divide by the new 'total' (which is 5/6 - to divide by 5/6 is the same as multiplying by 6/5, if that helps...) [Eqn. (1/2) / (1- 1/6) = (1/2) / (5/6) = (1/2) * (6/5) = (1*6)/(2*5) = 6/10 = 3/5 ] Whaddya know! It came out the same! So now we know how to do it, we can generalise to any probability. Let's have p1,p2,p3,...,pn be the probabilities which are staying. r (p_n+1_) is the probability that we are going to remove. q1,q2,q3,...,qn are the resulting probabilities after removing r. So, to find a new probability, divide by the old total (without the one we are removing) [Eqn. qi = pi / (Sigma pi) ] We can tidy this up a bit, by noticing an easier way to write [Eqn. Sigma xi ] [Eqn. (Sigma pi) + r = 1 => (Sigma pi) = 1-r = !r ] Which gives us the final result: [Eqn. qi = pi / !r ] [Eqn. pi = 1/2, r = 1/6 => qi = (1/2)/(5/6) = (6/10) = 3/5 ] as before. Now it's your turn. Prove these: [Eqn. (Sigma qi) = 1] -- Proves that z is still a valid probability, it adds up to 1. [Eqn. pa/pb = qa/qb ] -- Proves that if one cat ate three times as much as another before their sibling left, they still do after. ---- Answers: 1. [Eqn. Sigma qi = Sigma ( pi/!r) ) = (Sigma pi) / !r = !r/!r = 1 ] a) Uses [Eqn. Sigma (x*c) = (Sigma x)*c ] b) Uses [Eqn. (Sigma pi) + r = 1 => (Sigma pi) = !r ] from above 2. Substitute p's into the q's, using [Eqn. qi = pi / !r ] [Cat -- Mind your manners!] Gives [Eqn. qa/qb = (pa/!r) / (pb / !r ) ] Which simplifies to: [Eqn. pa/pb = ( pa * !r ) / ( pb * !r ) ] Which you can then just cancel out the !r parts. ------------ ??. Unless you're psychic and you know where the kittens are first. {The sodding 'open the box?' problem - competitor and doors. Guhhh....} One of the ways we can tell that science is working is that we can derive an unexpected result from the science - and then go out into the world and see that it is true. This helps convince us that our initial assumptions were good ones. If we can go out into the world and say "This is not true" - then either we made a mistake in our working, or our theories are wrong and we need to fix them. Either is a good thing to discover! So, welcome to 'unexpected result in probability' number one: What's behind door number three? Here's the set-up. [TODO: Piccie] It's a gygaxian game show or something, complete with a compere. There are three doors. You have to choose to open one, and only one. Behind one of the doors is an empty chest guarded by a grizzly bear. Behind one is a magical crown. Behind the last door is a swarm of angry killer bees. Now, obviously, you want to get the crown. And equally obviously, you want to not get eaten by a bear or stung by killer bees. So - choose a door. Three doors, no idea which is the right one - 1/3 chance of getting the prize. But this being a game show, the compere makes tutting noises. Imagine him as Chris Tarrant (it makes the next bit that much sweeter). "Are you sure?" he asks. "Is that your final answer?" Then, to try to convince you that you've made the wrong choice, he goes into one of the rooms that you didn't choose. There's a horrible scream - he's found one of the booby traps. You don't know which one, and you're not stupid enough to go and look. Now - the question is: Where is the crown most likely to be? Is it behind the door you chose, or behind the door that neither of you chose? Should you switch keep your selection, or should you switch? Now - common sense says "It doesn't matter! You made the choice, it's 1/3 chance to be behind any of the doors - now there's two doors, and it's 1/2 chance to be either one." But we're (pretending to be) mathematicians! We laugh in the face of common sense! And we'd rather not laugh in the face of killer bees (or grizzly bears). So let's work it out. [MagiCat -- Hahaha! I laugh!] Firstly, there's your door. We know that it had a 1/3 chance of being the right door. But then the compere opened another door - and showed us that it wasn't the treasure door. So it has a ZERO chance of being the right door, now. So we know that the remaining door has a 1/2 chance of being the right door (it might be your door, or it might be the door you chose.) But that doesn't add up! Until we use conditional probability. Call the door you first chose 'A'. Call the other doors 'B' and 'C'. We have to call them something, and we're not as imaginative as we were in Play-School. The full table is: Treasure is in: Door A Door B Door C Total Compere opens Door A (r1) 0 1/6 1/6 1/3 Door B (r2) 1/6 0 1/6 1/3 Door C (r3) 1/6 1/6 0 1/3 Total 1/3 1/3 1/3 1 Those are all the possible options - the compere opens a door, but not the one with the treasure behind it. It still looks equally likely that the compere will show us each door, and when he does the treasure is equally likely to be behind each of the others. But wait! We've only included him not showing us the treasure - but we also know that he doesn't open the door we choose. That changes things. The door 'A' row has to vanish - he can't do that. But it has to go somewhere. Add it to the other two rows. Which leads to this table: Treasure is in: Door A Door B Door C Total Compere opens Door B (r2) 1/6 0 1/3 1/2 Door C (r3) 1/6 1/3 0 1/2 Total 1/3 1/3 1/3 1 Good. He's equally likely to open either of the other two doors. That's what we wanted. But where is the treasure? Add up the columns. Door A = 1/3 Door B = 1/3. Door C = 1/3. Still equally likely to be in any of them. Which is also what we wanted. But now let's make that choice. If he opens door B, we can now rule door B out as having the treasure. (Add it to the other columns, just like before) Treasure is in: Door A Door C Total Compere opens Door B (r2) 1/6 1/3 1/2 Door C (r3) 1/2 0 1/2 Total 2/3 1/3 1 But we know that the compere opened door B - we just said so. (And we can say he opens door C, and do the adding up again (please do so) and find we get the same table, but with different column headings.) So we can just read the door B row - and see that we have a far better chance of the safe door being door C than it being the door (A) that you first chose. How strange! So let's test this out. First we'll need a load of rooms and doors, some magical crowns, a hundred gallons of honey to lure the bears and bees in... [Cat {quakes in fear}] ... or just do it with bits of paper. Ok. So it really does work this way. How odd? You'll have to take this on trust - proving this is in the realm of 'information theory' - but the answer is the compere. He opens the door that is not the prize - he KNOWS where the safe door is (and it's a shame he gets eaten or stung to death because otherwise you could just ask him) By his choice of door he's giving you a bit of information about the puzzle that you didn't have before. If you chose the wrong door, he's giving you more information ("This is the only other wrong door") than he does if you choose the right one ("This is one of two other doors that kill you") Of course, you don't know which he is saying (and can't ask - grizzly bears and killer bees and so on) so there's still some uncertainty. But a lot less than there was at the start of the puzzle. Q. What happens if the compere opens door C, instead of door B? A1. It all works just the same. Work it out for yourself. A2. And then slap yourself. Because the doors weren't labelled, remember? We came up with those names AFTER the compere got eaten. We could have called them 'our door' 'compere door' 'spare door' instead of A B and C. And then whichever doors the compere and ourselves had chosen, we could use those names. 3x. Buying multiple lottery tickets. OK. We're going to buy more than one ticket. What should we do to have the best expected winnings. TODO: Nooooooooo! 40. Expectation and deviation. Or: Losing slowly versus the chance of a big win. And why probability cannot tell you which is the right thing to do. 41. ????? 42. General comments. We like to reduce things to a number of equally likely results, and then count how many there are. We like to make sure that everything is independent - or reduce it to individual independent cases and analyse each separately.